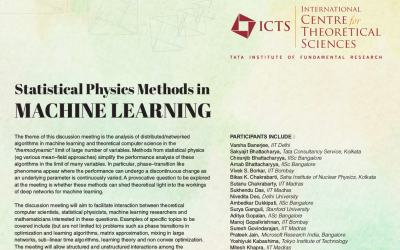

The theme of this Discussion Meeting is the analysis of distributed/networked algorithms in machine learning and theoretical computer science in the "thermodynamic" limit of a large number of variables. Methods from statistical physics (e.g., various mean-field approaches) simplify the performance analysis of these algorithms in this limit. In particular, phase-transition-like phenomena appear, in which performance can change discontinuously as an underlying parameter is varied continuously. A provocative question to be explored at the meeting is whether these methods can shed theoretical light on the workings of deep networks for machine learning.

The Discussion Meeting aims to facilitate interaction among theoretical computer scientists, statistical physicists, machine learning researchers, and mathematicians interested in these questions. Specific topics to be covered include (but are not limited to) phase transitions in optimization and learning algorithms, matrix approximation, mixing in large networks, sub-linear-time algorithms, learning theory, and non-convex optimization. The meeting will allow both structured and unstructured interactions among the participants around the main theme.

*Participation is by invitation only.
